In the News

Cambridge Analytica Exploitation of Facebook User Data Underscores Importance of Personal Privacy

By now, even the most casual Internet users are aware of Facebook’s flat-footed response to the debacle surrounding data analytics firm Cambridge Analytica. Over 50 million Facebook users were burned when Dr. Aleksandr Kogan, a psychology professor at the University of Cambridge, not only obtained personal information from users who downloaded his Facebook app, but also harvested information about their “friends” who never agreed to share anything. That information was then distributed to both Cambridge Analytica and Eunoia Technologies.

Facebook VP & Deputy General Counsel Paul Grewal made it abundantly clear that this was by no means a data breach.

“Aleksandr Kogan requested and gained access to information from users who chose to sign up to his app, and everyone involved gave their consent. People knowingly provided their information, no systems were infiltrated, and no passwords or sensitive pieces of information were stolen or hacked,” Grewal wrote in his public explanation. He added that Kogan told Facebook he would use the collected data for academic research, but then violated established Platform Policies by passing user information to companies that do political, government and military work around the globe.

When Facebook learned about the violation in 2015, it removed the offending app and demanded that the involved parties destroy the data and certify its elimination.

“This was a breach of trust between Kogan, Cambridge Analytica and Facebook,” Facebook CEO Mark Zuckerberg said in a Facebook post. “We have a responsibility to protect your data, and if we can’t then we don’t deserve to serve you.”

Though Facebook is not responsible for the actions of app creators who use the platform to collect user data for nefarious purposes, the social media giant is beginning to understand that basing privacy-related policies on an honor system only works until it doesn’t. Zuckerberg said Facebook will perform a deep audit of apps that “had access to large amounts of information before we changed our platform to dramatically reduce data access in 2014.” The company also plans to restrict the data accessible to current and future apps, require all app creators to sign a contract justifying any data they request from users, and give Facebook users more transparency into their privacy settings.

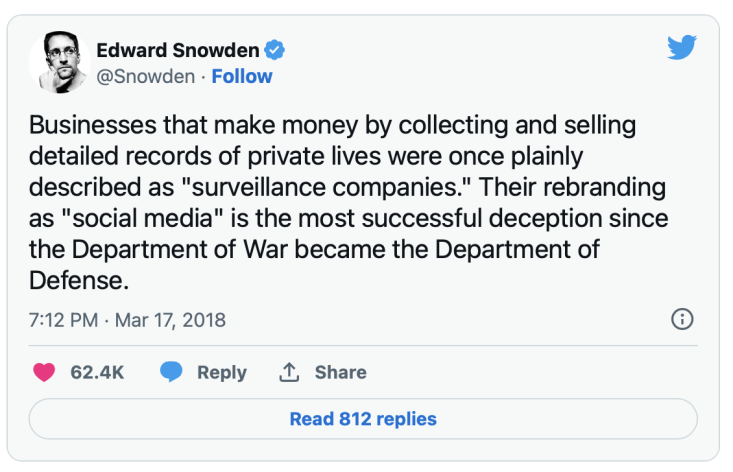

It took a whistleblower and unparalleled news coverage for Facebook to implement these changes. On social media, the user is the product, a fact Edward Snowden wasted no time cautioning Twitter about.

And after Elon Musk deleted his companies' Facebook pages, jokes circulated around the Internet about an offer to purchase the Facebook platform just so he could wipe it from existence.

“[T]his news is a reminder of the inevitable privacy risks that users face when their personal information is captured, analyzed, indefinitely stored, and shared by a constellation of data brokers, marketers, and social media companies,” notes the EFF.

This is not the first time Facebook has been in hot water over privacy concerns. As we have covered in previous blog posts, it continues to act as a case study on privacy and trust with real-world consequences and dismaying results. Because of this, many users hopped on the #DeleteFacebook bandwagon while the company's stock price went into a tailspin. This latest news only further cements the importance of selecting a provider you can trust, and of always checking the settings and reading the fine print. We can’t expect companies to protect our privacy for us, so we must take control ourselves.

If deleting your presence from Facebook is not a viable solution, a thorough audit of your own privacy settings may be in order, and privacy tools like VyprVPN can help safeguard your presence online.